The Assumption

Every major model release in the past year has led with context window size. 128K tokens. 200K. A million. The implicit message: more context is better. Dump your codebase, your document corpus, your complete conversation history into the prompt, and the model will figure it out.

This assumption drives real architectural decisions. Teams skip building retrieval pipelines because the window is “big enough.” They concatenate entire documents instead of extracting relevant sections. They let conversation histories grow unbounded because truncation feels like throwing away information.

The evidence says this is wrong. Bigger context windows are a capability, but using them naively degrades quality, inflates costs, and slows responses.

Lost in the Middle

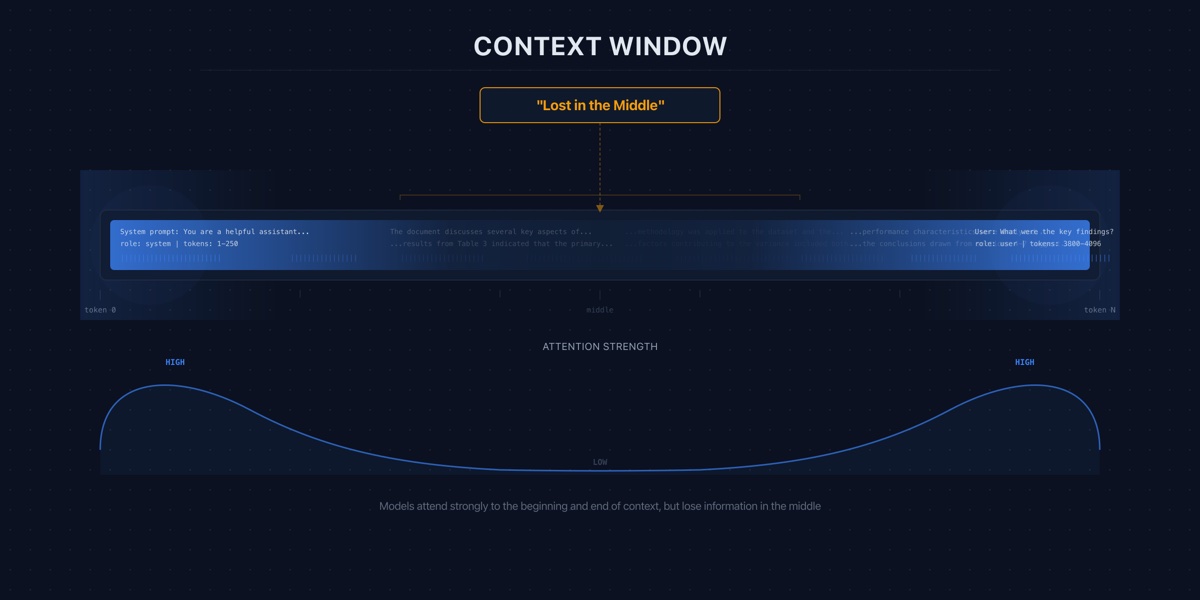

The most well-documented failure mode of long-context usage is positional bias. Models do not attend to all parts of their context equally.

Liu et al. demonstrated that when relevant information is placed in the middle of a long input, performance degrades significantly compared to when the same information appears at the beginning or end [S1]. Moving the answer from the top of the context to the middle can drop accuracy by 20 percentage points or more. The degradation follows a U-shaped curve: performance is highest near the beginning or end of the input, lowest in the middle [S1].

For production systems, this means document order matters — not just relevance. Place the most relevant content at the beginning of the context or immediately before the query at the end.

The deeper implication: stuffing more context into the window actively hurts when additional content pushes relevant information into the degradation zone. Adding a marginally relevant document can make the model less likely to use the highly relevant document already there.

Effective vs. Advertised Window

Positional bias is compounded by a more fundamental problem: advertised context windows vastly overstate usable capacity.

A systematic evaluation found that models fell short of their advertised maximum context by 99% [S2]. Performance degradation was observed as early as 1,000 tokens before the stated limit [S2]. A model advertising 128K tokens may produce degraded outputs well before that boundary.

The advertised number is a technical ceiling — the maximum tokens the model processes without erroring. The effective number is the context length at which the model still performs reliably on your task. The gap between them is far larger than most teams assume.

The practical response: benchmark your specific model on your specific task at the context lengths you actually use. A model that handles 32K tokens of well-organized code may fail at 16K tokens of unstructured meeting transcripts. Do not rely on advertised maximums.

RAG vs. Long Context

Given that long context degrades in practice, should teams abandon it for retrieval-augmented generation? The answer is more nuanced than either camp suggests.

Direct comparisons show that long-context approaches outperform RAG on average quality when relevant information is present and well-positioned [S3]. Giving the model everything eliminates retrieval errors — there is no risk of the retrieval step missing the right document.

But accuracy comes at significantly higher inference cost per query [S3]. A 100K-token prompt through a frontier model at $3.00 per million input tokens costs $0.30 per request. A RAG pipeline retrieving 2K relevant tokens costs $0.006 — a 50x difference in per-query inference spend, plus retrieval infrastructure.

The tradeoff resolves differently based on three variables.

Query volume. At hundreds of queries per day, the cost difference is negligible. At millions, cost dominates.

Information structure. If your corpus is well-structured and retrieval is reliable, RAG performs comparably at a fraction of the cost. If retrieval is unreliable, long context avoids retrieval failures through brute force.

Latency. Long context increases TTFT proportionally to input length. A 100K-token prompt takes roughly 25x longer to prefill than a 4K-token prompt. For latency-sensitive applications, this alone can disqualify long-context approaches.

For most production systems, the answer is RAG with carefully managed context — retrieve relevant information and insert it into a reasonably sized window, with attention to positioning.

Context Compression: The Middle Path

Between stuffing the full context and relying entirely on retrieval, there is a third option: compress before insertion.

LLMLingua achieves a 20x compression ratio with only 1.5% quality degradation on downstream tasks [S4]. A 100K-token context compresses to 5K tokens, reducing inference cost by 95% while retaining 98.5% of task performance.

Combined with retrieval, compression enables a pipeline where you retrieve broadly — pulling in more potentially relevant documents than a strict top-k — then compress the set before insertion. This reduces retrieval miss risk without paying the full cost of long-context inference.

Compression also mitigates the lost-in-the-middle problem. By removing redundant and low-information content, it increases the density of relevant information. The model has less noise to attend through, improving its ability to locate what matters.

The tradeoff is added pipeline complexity — a few hundred milliseconds of preprocessing — and a small, non-zero quality cost. For applications where every percentage point of accuracy matters, measure the impact on your specific task rather than relying on aggregate benchmarks.

Practical Context Management

Production teams should treat context window usage as a budget to allocate, not a capacity to fill.

Set a working limit well below the advertised maximum. If your model supports 128K tokens, design your system to operate within 32K-64K under normal conditions. This provides headroom and avoids the degradation zone [S2].

Control information positioning. Most relevant content goes at the beginning, right after system instructions. User query goes at the end. Never bury critical information in the middle [S1].

Measure quality as a function of context length. Build evaluation sets at different sizes — 2K, 8K, 32K, 64K tokens. If quality plateaus at 8K, every additional token is cost without benefit.

Compress retrieved context. If you retrieve more than 4K tokens per query, compression is likely cost-effective. A 40K retrieved context compresses to 2K tokens with minimal quality loss [S4].

Monitor context utilization in production. Track the distribution of actual context lengths. Most systems show a long tail: the majority of requests use far less context than the maximum, but a small percentage drive disproportionate cost and latency. Handle outliers through truncation, summarization, or routing to a different pipeline.

The Cost Trajectory

Inference costs are dropping at approximately 10x per year [S5]. Today’s expensive 100K-token query will be cheap in two years. But frontier pricing remains high enough that long-context usage at scale is cost-prohibitive for many applications today [S5].

More importantly, the quality degradation from positional bias and effective window limits is a model architecture problem, not a pricing problem. Cheaper tokens that the model ignores are still wasted tokens. Until models attend uniformly across their full context, managing what goes into the window will remain an engineering discipline — not a problem that falls away with scale.