Why Every Team Starts on Managed APIs

The math is seductive at prototype scale. A team building an LLM-powered feature signs up for an API key, writes a few integration calls, and ships a working demo inside a week. No GPU procurement. No model serving infrastructure. No new hires. GPT-4o costs $2.50 per million input tokens and $10.00 per million output tokens [S1]. Claude Sonnet runs $3.00 and $15.00 respectively [S2]. At a few thousand calls a day during development, the monthly bill is a rounding error.

Then the product launches.

The pricing was always linear; the team just never modeled it at production volume. A workload processing 10 million input tokens and 2 million output tokens per day on GPT-4o runs $45 a day ($25 for input plus $20 for output), or $1,350 over a 30-day month. Manageable. Scale that tenfold, which is not unusual for a feature serving real user traffic, and the same API, same code, and same prompts cost $13,500 a month. With Claude Sonnet at $15.00 per million output tokens [S2], output-heavy workloads at the same scale run higher still.
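The arithmetic above can be reproduced with a few lines of Python. This is a minimal sketch: the prices are the GPT-4o figures quoted earlier [S1], while the function names and the 30-day month are assumptions made for illustration.

```python
# GPT-4o prices in USD per million tokens, as quoted above [S1].
GPT_4O = {"input": 2.50, "output": 10.00}

def daily_cost(input_tokens_m, output_tokens_m, price):
    """Cost in USD for one day; token counts are given in millions."""
    return input_tokens_m * price["input"] + output_tokens_m * price["output"]

def monthly_cost(input_tokens_m, output_tokens_m, price, days=30):
    """Linear pricing: the monthly bill is the daily cost times the day count."""
    return daily_cost(input_tokens_m, output_tokens_m, price) * days

baseline = monthly_cost(10, 2, GPT_4O)    # 10M input, 2M output tokens per day
scaled = monthly_cost(100, 20, GPT_4O)    # the same workload at 10x volume

print(f"baseline: ${baseline:,.2f}/month")
print(f"10x:      ${scaled:,.2f}/month")
```

Running the same function against projected launch traffic, rather than development traffic, is the modeling step most teams skip.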

Nothing changed architecturally. The team discovered what linear pricing looks like at production volume. And by this point, the API dependency is wired into the deployment pipeline, the error handling, and the team’s operational habits.

This is where migration pressure begins.

The Three Migration Pressures

Migration pressure rarely starts with a spreadsheet. It starts with a legal review, a security incident, or a board-level question about vendor concentration. Cost amplifies the urgency, but it is seldom the trigger.

Privacy and Compliance

Even teams that can comfortably afford their API spend may be compelled to migrate by data residency requirements — and those requirements are tightening.

The major API providers have improved their data handling posture significantly. OpenAI does not use API data to train models by default and has not done so since March 2023. However, abuse-monitoring logs are generated for all API usage and retained for up to 30 days [S5]. Zero Data Retention is available but must be explicitly configured. For regulated workloads, the relevant question is not whether the provider trains on your data — it is whether your data traverses infrastructure you do not control, in a jurisdiction you did not choose.

For organizations subject to GDPR, sending production data containing personal information to a US-based API constitutes a cross-border transfer. Post-Schrems II, this requires Transfer Impact Assessments and supplementary safeguards beyond Standard Contractual Clauses [S6]. The EU-US Data Privacy Framework was upheld by the EU General Court in September 2025, but it faces continued legal challenges and depends on US executive decisions that could shift its standing [S6]. Building a production system on the assumption that the current transfer framework will hold indefinitely is a bet, not a compliance strategy.

The enterprise response to these pressures became visible early. In 2023, three Samsung engineers inadvertently leaked confidential source code, meeting transcripts, and proprietary test sequences through ChatGPT — prompting Samsung to ban all external generative AI tools and begin developing internal alternatives [S8]. Multiple major financial institutions and technology companies imposed similar restrictions in the same period. These bans were not about model quality. They were about data control.

Self-hosting inference within your own jurisdiction eliminates third-party data processing entirely. For organizations where that matters, the migration decision is already made — regardless of cost.

Control and IP Ownership

Beyond compliance, there is a strategic case for owning the inference stack.

Fine-tuning on proprietary data requires access to model weights. Distilling a large model’s capabilities into a smaller, faster specialist requires the same. With managed APIs, neither is possible — you are constrained to whatever the provider exposes through its endpoint.

With a self-hosted stack, latency and throughput become your team’s variables to optimize, not a vendor’s rate limit to work around. On a managed API, by contrast, when the provider deprecates a model version, changes pricing, or experiences an outage, your product absorbs the impact with no recourse.

The licensing landscape now supports this shift. Llama 3.1 and later versions are commercially usable and royalty-free. The output training restriction present in Llama 3.0 — which prohibited using model outputs to improve competing models — was removed in 3.1 [S10]. Organizations can fine-tune, distill, and deploy derivative models without licensing friction, provided they remain under the 700-million monthly active user threshold.

This is not a philosophical argument about openness. It is a risk assessment: building core product logic on a single provider’s API creates a dependency with no SLA on model continuity.

Margin

Then there is cost — the pressure that gets the most attention and is the least well understood.

The per-token gap between managed frontier APIs and open-weight alternatives is substantial. GPT-4o runs $2.50/$10.00 per million tokens (input/output) [S1]. Claude Sonnet runs $3.00/$15.00 [S2]. Llama 3.3 70B, served through Together AI’s API, runs $0.88 per million tokens for both input and output [S3]. Llama 4 Maverick is lower still at $0.27 input and $0.85 output [S3].

At high volume, this gap compounds. To illustrate: a workload generating $25,000 per month in Claude Sonnet output costs would run under $1,500 at Llama 3.3 70B’s per-token rate through an open-weight API provider, assuming comparable token volume and acceptable output quality for the task [S2, S3]. The actual savings depend on whether the open-weight model meets your quality bar without requiring more tokens or additional post-processing to reach the same result.
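The comparison above is a unit-price conversion at constant token volume. The sketch below shows it using the quoted prices; the function name is hypothetical, and the calculation deliberately ignores any quality-driven change in token count.

```python
CLAUDE_SONNET_OUTPUT = 15.00  # USD per million output tokens [S2]
LLAMA_33_70B = 0.88           # USD per million tokens, input or output [S3]

def equivalent_spend(current_spend, current_price, new_price):
    """Spend at new_price for the token volume that current_spend buys at current_price."""
    tokens_m = current_spend / current_price  # volume in millions of tokens
    return tokens_m * new_price

llama_spend = equivalent_spend(25_000, CLAUDE_SONNET_OUTPUT, LLAMA_33_70B)
print(f"${llama_spend:,.2f} per month")
```

If the open-weight model needs more output tokens or an extra post-processing pass to reach the same result, scale `tokens_m` up accordingly before comparing.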

But margin pressure alone does not tell you what to migrate to. It tells you that staying on managed frontier APIs at scale is expensive. Whether self-hosting is actually cheaper — or whether an open-weight API provider is the better move — requires a different analysis entirely.

The Cost Myth: Open-Weight Is Not Automatically Cheaper

This is where most migration conversations go wrong.

The default assumption is that running your own GPUs is cheaper than paying per token. At sufficient scale, that can be true. But “sufficient scale” is higher than most teams realize, and the total cost of self-hosting extends well beyond the GPU bill.

Consider the numbers directly. Self-hosting Llama 3.1 405B on an 8xH100 node costs approximately $5.47 per million output tokens at moderate utilization. The Together AI API serves the same model — same weights, same architecture — for $3.50 per million output tokens [S7]. At moderate volume, self-hosting is more expensive than calling someone else’s API for the identical model.

The gap exists because of utilization and overhead. At moderate request volume, GPUs sit partially idle — you are paying for 24/7 capacity to serve variable demand. Add the operational cost: one senior MLOps engineer at $200K+ per year consumes the equivalent of roughly 4,000 H100-hours in salary alone [S7]. That is before monitoring, failover, model updates, and on-call rotations.

One widely cited threshold puts the self-hosting cost crossover at approximately 10 billion tokens per month [S7]. The actual breakeven for any given team depends on model size, GPU generation, utilization rate, and operational overhead — but the order of magnitude is instructive. Below that range, APIs — whether managed or open-weight — are likely cheaper.
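To see why utilization drives the crossover, it helps to treat self-hosting as a fixed monthly bill spread over however many tokens are actually served. The sketch below is a simplified model under stated assumptions: the $20,000 node cost and $8,000 engineering share are hypothetical placeholders, not figures from the sources, and the model ignores the throughput ceiling of a single node.

```python
def breakeven_tokens_m(fixed_monthly_cost, api_price_per_m):
    """Monthly volume (millions of tokens) at which a fixed self-hosting bill
    equals the API bill for the same volume."""
    return fixed_monthly_cost / api_price_per_m

def self_hosted_price_per_m(fixed_monthly_cost, tokens_m):
    """Effective USD per million tokens when a fixed bill is spread over actual volume."""
    return fixed_monthly_cost / tokens_m

# Hypothetical inputs: GPU node rental plus a share of engineering time.
FIXED_MONTHLY = 20_000 + 8_000
API_PRICE = 3.50  # USD per million output tokens, Llama 3.1 405B via API [S7]

breakeven = breakeven_tokens_m(FIXED_MONTHLY, API_PRICE)
print(f"breakeven: {breakeven:,.0f}M tokens/month")
print(f"at 2B tokens/month: ${self_hosted_price_per_m(FIXED_MONTHLY, 2_000):.2f}/M")
```

With these placeholder inputs the crossover lands in the single-digit billions of tokens per month, the same order of magnitude as the cited threshold. Substituting your own fixed costs is the point of the exercise.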

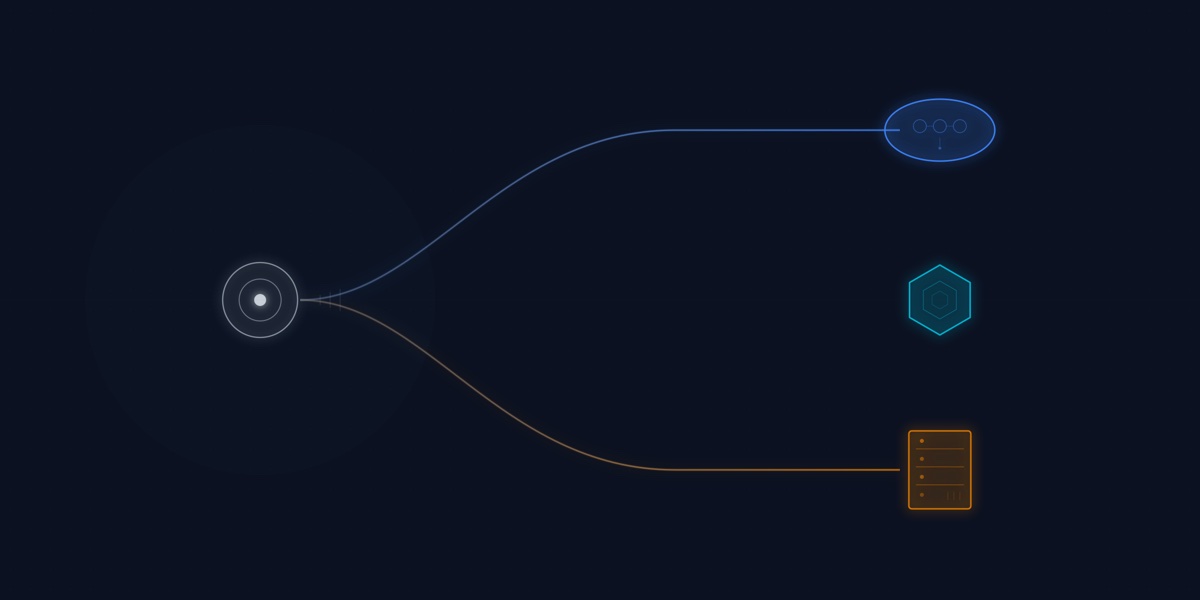

This analysis reveals something that most migration articles miss: there are not two deployment modes. There are three.

- Managed API (OpenAI, Anthropic): Highest per-token cost. Zero infrastructure overhead. Frontier model quality.

- Open-weight via API (Together AI, Fireworks, Groq): 3–5x lower per-token cost [S3]. Still zero infrastructure overhead. Open-weight model quality.

- Self-hosted (vLLM, SGLang on your GPUs): Variable cost depending on volume. Full infrastructure burden. Full control over data and model.

The common mistake is collapsing the second and third categories into a single “open-weight” option. That conflation is why teams miscalculate. A team that sees Llama 3.3 70B at $0.88/M tokens [S3] and assumes they need to provision GPUs to access it is solving the wrong problem. The open-weight-via-API tier delivers the cost benefit without the operational burden — and for many teams, it is the right first move.

If cost alone does not justify self-hosting, and migration is not automatically cheaper, then the real question is not whether to migrate. It is how to decide.

A Decision Framework, Not a Slogan

“Migrate to open-weight” is not a strategy. It is a slogan that obscures three genuinely different decisions with different risk profiles, cost structures, and operational requirements. What follows is a framework for converting those inputs into a concrete choice.

When to Stay on Managed APIs

Managed APIs remain the right choice when three conditions hold: your volume is well below the range where per-token costs dominate your margins, your workloads require frontier-level reasoning that open-weight models do not yet match, and you face no regulatory forcing function for data residency.

This is a legitimate long-term position, not a failure to migrate. For tasks like multi-step agentic workflows, complex code generation, and open-ended reasoning across large contexts, managed frontier models still lead. If your monthly token volume is well under 10 billion tokens [S7] and your data classification allows third-party processing, the simplicity of a managed API is a genuine operational advantage.

When to Use Open-Weight via API

This is the option most teams overlook.

If your primary motivation is cost reduction but you lack the infrastructure team to self-host, open-weight models served through third-party inference APIs offer the middle path. The quality gap that once justified the managed API premium has narrowed substantially — at least for a meaningful class of production tasks. On the LMSYS Chatbot Arena, which ranks models through blind human preference voting, Llama 3.1 405B holds an Elo rating of 1504, exceeding GPT-4o at 1471. Llama 3.3 70B sits at 1489, also above GPT-4o [S4]. These are general preference rankings, not guarantees of parity on every task — but they indicate that for workloads like classification, extraction, summarization, and structured output generation, models in the 70B parameter range can deliver comparable results at a fraction of the per-token cost [S3].

You get the savings without provisioning a single GPU.

When to Self-Host

Self-hosting is the right decision when at least one of three conditions applies: regulatory requirements mandate that inference data never leaves your infrastructure, your token volume exceeds the range where self-hosting becomes cost-competitive [S7], or your product strategy requires fine-tuning or distillation on proprietary data.

The operational cost is real. But for teams in this category, the alternative — accepting compliance risk, paying unsustainable API margins, or forgoing fine-tuning entirely — is worse.

The tooling has matured enough to make this viable. vLLM achieves 85–92% GPU utilization through PagedAttention and delivers a time-to-first-token of 123 ms on Llama 3.1 70B running on a single H100 [S9]. SGLang reaches 460 tokens per second at batch size 64 on the same hardware [S9]. These are production-grade throughput numbers on current-generation infrastructure.

Self-hosting in 2026 is an infrastructure problem, not a research problem. The question is whether your team has the operational capacity to treat it as one.

The Hybrid Architecture

In practice, many production teams should not commit to a single deployment mode. They should route traffic across modes based on two dimensions: task complexity and data sensitivity.

Route by task complexity. A request that classifies a support ticket into one of twelve categories does not need the same model that generates a multi-step financial analysis. Route classification, extraction, summarization, and structured output tasks to Llama 3.3 70B via an open-weight API provider at $0.88 per million tokens [S3] — or self-hosted via vLLM if volume and compliance warrant it. Reserve managed frontier APIs for multi-step agents, complex code generation, and tasks where the quality difference between open-weight and frontier models affects the end result.

On general preference benchmarks like the LMSYS Arena, open-weight models at the 70B+ parameter range score competitively with GPT-4o [S4]. The remaining performance gap is concentrated in cutting-edge reasoning and complex multi-turn tasks — precisely the workloads that justify the managed API premium, and typically a minority of total token volume.

Route by data sensitivity. Customer records, PII, health data, and anything subject to regulatory scrutiny should route to self-hosted inference within your jurisdiction. Internal operational data, non-sensitive content processing, and development workloads can route to open-weight or managed API providers without the same constraints.

The implementation is a routing layer — an API gateway or lightweight dispatcher — that examines the task type and data classification of each request and directs it to the appropriate backend. This is the same pattern teams use for database read replicas, CDN routing, and tiered storage. The routing dimensions are task complexity and data sensitivity rather than latency and geography.
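A dispatcher of this kind can be very small. The following is a minimal sketch, not a production gateway: the `Request` fields, the task labels, and the backend names are all hypothetical. It assumes each request already carries a task type and a data classification set upstream.

```python
from dataclasses import dataclass
from enum import Enum

class Backend(Enum):
    SELF_HOSTED = "self_hosted"
    OPEN_WEIGHT_API = "open_weight_api"
    MANAGED_API = "managed_api"

# Hypothetical task labels; a real system would define its own taxonomy.
SIMPLE_TASKS = {"classification", "extraction", "summarization", "structured_output"}

@dataclass(frozen=True)
class Request:
    task_type: str
    contains_regulated_data: bool

def route(request: Request) -> Backend:
    """Pick a backend from data sensitivity first, then task complexity."""
    # Regulated data stays on controlled infrastructure, whatever the task.
    if request.contains_regulated_data:
        return Backend.SELF_HOSTED
    # Well-bounded tasks go to the cheaper open-weight tier.
    if request.task_type in SIMPLE_TASKS:
        return Backend.OPEN_WEIGHT_API
    # Everything else is treated as needing a frontier model.
    return Backend.MANAGED_API

print(route(Request("classification", contains_regulated_data=False)))
print(route(Request("multi_step_agent", contains_regulated_data=True)))
```

Checking sensitivity before complexity is a design choice in this sketch. It means a complex task on regulated data is served by the self-hosted model even if a frontier model would do better, which is the trade the compliance requirement imposes.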

A concrete example: a team sends 70% of token volume to Llama 3.3 70B through an open-weight API at $0.88/M for classification and extraction tasks, 20% to a self-hosted instance for workloads involving regulated data, and 10% to Claude Sonnet at $3.00/$15.00 for complex reasoning [S1, S2, S3]. The blended cost is a fraction of routing everything through a single managed frontier API — and the compliance posture is stronger because regulated data never leaves controlled infrastructure.
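The blended rate for this example can be estimated as a volume-weighted average. Two inputs below are assumptions rather than sourced figures: the self-hosted effective rate of $2.00 per million tokens is a hypothetical placeholder, and the input-to-output mix reuses the 10M-to-2M ratio from the earlier workload.

```python
def mixed_rate(input_price, output_price, output_share):
    """Per-million-token rate for traffic with the given share of output tokens."""
    return (1 - output_share) * input_price + output_share * output_price

def blended_rate(routes):
    """Volume-weighted average rate; routes is a list of (share, rate) pairs."""
    total_share = sum(share for share, _ in routes)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    return sum(share * rate for share, rate in routes)

OUTPUT_SHARE = 2 / 12     # assumption: 10M input to 2M output tokens
SELF_HOSTED_RATE = 2.00   # hypothetical effective USD per million tokens

sonnet = mixed_rate(3.00, 15.00, OUTPUT_SHARE)   # Claude Sonnet [S2]
blended = blended_rate([
    (0.70, 0.88),               # Llama 3.3 70B via open-weight API [S3]
    (0.20, SELF_HOSTED_RATE),   # self-hosted, regulated data
    (0.10, sonnet),             # complex reasoning on Claude Sonnet
])
print(f"all Sonnet: ${sonnet:.2f}/M, blended: ${blended:.2f}/M")
```

Under these assumptions the blended rate comes out at roughly a third of the all-Sonnet rate. The result is sensitive to the self-hosted figure, which depends on utilization.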

What This Means for Your Team

Before choosing a migration path, answer three questions:

- What is your token volume? Below roughly 10 billion tokens per month, self-hosting is unlikely to save money over open-weight API providers. But open-weight via API may still reduce your spend significantly compared to managed frontier APIs. Volume determines which cost curve you are on.

- What is your compliance posture? If your production data is regulated (GDPR, HIPAA, or sector-specific requirements), the migration decision may already be made for you, independent of cost. Self-hosting eliminates third-party data processing. Open-weight API providers may or may not meet your data residency requirements depending on where they run their infrastructure.

- What is your quality floor? For tasks where open-weight models perform comparably to frontier models on established benchmarks (classification, extraction, summarization, structured output), there is no quality argument for paying the managed API premium. For tasks where frontier models still lead, the premium is justified. Most production systems have both types of workload, which is why the hybrid architecture is usually the right answer.
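The three questions above can be read as inputs to a small decision function for a single workload. This is a rough sketch under assumptions: the function and parameter names are hypothetical, the 10-billion-token threshold is the approximate crossover cited earlier [S7], and the order in which the checks run is a judgment call rather than something the sources prescribe.

```python
SELF_HOST_VOLUME_B = 10  # rough crossover, billions of tokens per month [S7]

def recommend(tokens_per_month_b, data_must_stay_in_house,
              needs_model_weights, needs_frontier_quality):
    """Map volume, compliance, and quality answers to a deployment mode."""
    # Compliance or fine-tuning/distillation needs force self-hosting regardless of cost.
    if data_must_stay_in_house or needs_model_weights:
        return "self-hosted"
    # Workloads that need frontier-level reasoning stay on managed APIs.
    if needs_frontier_quality:
        return "managed API"
    # Above the crossover volume, self-hosting can become cost-competitive.
    if tokens_per_month_b >= SELF_HOST_VOLUME_B:
        return "self-hosted"
    return "open-weight via API"

print(recommend(0.5, False, False, False))
print(recommend(0.5, True, False, True))
```

Applying the function per workload rather than per company tends to produce a mix of answers, which is the hybrid architecture in practice.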

These questions are simple to state. They are harder to answer without a clear picture of your current workloads, token volumes, data classification, and regulatory exposure. That is what an infrastructure audit is for.