Why Traditional Testing Fails for LLM Outputs

A traditional unit test asserts that f(x) == y. The same input produces the same output every time. LLM outputs do not work this way.

The instinct is to set temperature=0 and treat the model as deterministic. It is not. Research across GPT-3.5 Turbo, GPT-4o, Llama 3 8B, Llama 3 70B, and Mixtral 8x7B shows accuracy variations of up to 15% across 10 identical runs at temperature=0 with fixed seeds [S4]. In a worst-case example, GPT-4o scored 88% on its best run of a college math benchmark and 44% on its worst — a 44-point gap from the same prompt, same parameters, same model [S4]. Token-level agreement across runs can drop as low as 4.6% [S4].

This means every assertion that depends on exact output matching is structurally fragile. Teams respond in one of two ways: they stop testing LLM outputs entirely and rely on manual review in staging, or they write brittle regex and substring checks that break on every model update. Both approaches let regressions reach production undetected.

What is needed is a graduated methodology — one that applies deterministic assertions where possible, model-graded evaluation where necessary, and statistical thresholds to absorb the variance that remains.

What You Need Before Starting

This playbook assumes you have the following in place:

Prerequisites:

- A production or near-production LLM integration (API or self-hosted)

- An existing CI pipeline (GitHub Actions, GitLab CI, or equivalent)

- Familiarity with pytest

Tooling:

- pytest

- An LLM client SDK (OpenAI, Anthropic, or equivalent)

- Structured output support (JSON mode, function calling, or the Instructor library)

- Optional: DeepEval, Ragas, or LangSmith for eval framework integration

If you have these, you can implement every layer described below.

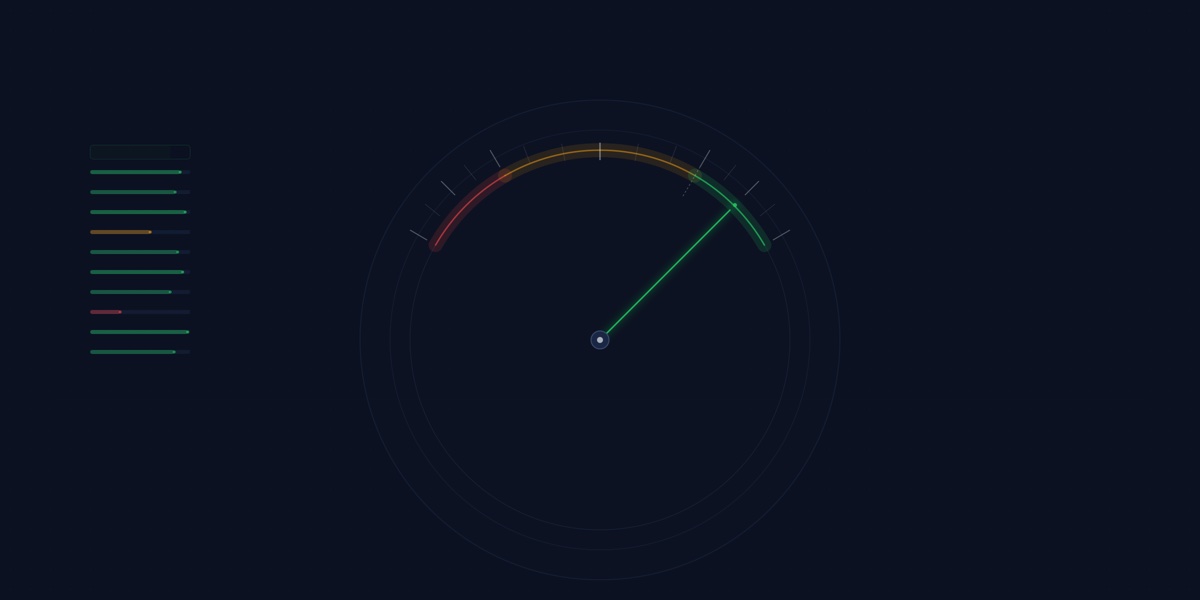

The Testing Hierarchy

The core principle is simple: prefer deterministic checks and escalate only when they cannot cover the quality you need to test. Anthropic’s evaluation documentation frames this as a grading hierarchy: code-based grading first (fastest, most reliable), LLM-based grading second (“test to ensure reliability first then scale”), human grading last (“avoid if possible”) [S5].

This playbook translates that hierarchy into three concrete testing layers. Each layer catches a different class of failure. Most teams should start at Layer 1 and add layers as their test suite matures.

Layer 1: Structured Outputs and Deterministic Assertions

This is the highest-leverage change you can make. If you constrain the LLM’s output to a schema, you can test it with standard assertions.

Structured outputs, via function calling or a JSON schema response format with strict: true, use constrained decoding to guarantee that every response conforms to a developer-supplied JSON schema. Plain JSON mode is weaker: it guarantees syntactically valid JSON, but not conformance to your schema. GPT-4o with structured outputs scores 100% on complex schema conformance evaluations [S3]. Without constrained decoding, earlier models scored below 40% on the same schemas, and prompt engineering alone only raised compliance to roughly 93% [S3]. On harder schemas, unconstrained generation drops to 38-65% compliance [S8].

Constrained decoding also improves the content within the schema. Benchmarks show task accuracy improvements of up to 4% and generation speedups of approximately 50% compared to unconstrained approaches [S8]. You get better testability and better outputs.

Once the LLM returns a valid, typed object, you can write standard pytest assertions — the same way you test any function return:

    from openai import OpenAI
    from pydantic import BaseModel, Field


    class TicketClassification(BaseModel):
        category: str = Field(description="One of: billing, technical, account, other")
        priority: str = Field(description="One of: high, medium, low")
        requires_escalation: bool


    client = OpenAI()  # reads OPENAI_API_KEY from the environment


    def classify(message: str) -> TicketClassification:
        # Request output that is parsed directly into the Pydantic schema.
        response = client.responses.parse(
            model="gpt-4o",
            input=[{"role": "user", "content": message}],
            text_format=TicketClassification,
        )
        return response.output_parsed


    def test_ticket_classification():
        # Call the model inside the test so importing this module makes no API call.
        result = classify("My payment failed and I'm locked out of my account")
        assert result.category in ["billing", "technical", "account", "other"]
        assert result.priority in ["high", "medium", "low"]
        assert isinstance(result.requires_escalation, bool)
        assert result.category == "billing"  # domain-specific assertion

This layer catches: parsing failures, missing fields, type errors, out-of-range values, enum violations. It eliminates the entire class of failures caused by unstructured output variance.

Layer 2: Model-Graded Evaluation

Some qualities cannot be captured by field assertions: relevance, helpfulness, tone, completeness, factual accuracy in free-text responses. For these, use a second LLM as a judge.

This sounds unreliable. It is more reliable than you expect. On the MT-Bench evaluation suite, GPT-4 as a judge achieves approximately 85% agreement with human evaluators — exceeding inter-human agreement of 81-82% measured on the same benchmark [S2]. The LLM judge is not perfect, but it is consistent enough for automated CI when properly calibrated.

Three implementation decisions matter:

Use binary pass/fail, not scoring scales. Multi-point rubrics (1-5 scales) introduce ambiguity that compounds across evaluators. “People don’t know what to do with a 3 or 4” [S7]. A binary “does this response meet the criteria: yes or no” is easier to calibrate, easier to threshold, and more stable across runs.

Calibrate against a domain expert. Start with approximately 30 examples graded by a single domain expert — not a committee. Iterate on the judge prompt until you reach at least 90% agreement, which is typically achievable in three iterations [S7]. Avoid off-the-shelf evaluation metrics without this validation step — pre-built scorers tend to “not correlate with domain expert judgments” [S7].

Mitigate known biases. LLM judges exhibit position bias (GPT-4 is consistent 65% of the time when answer order is swapped; Claude-v1 only 23.8%) [S2], verbosity bias (GPT-3.5 is fooled by verbose responses 91.3% of the time, compared to 8.7% for GPT-4) [S2], and self-enhancement bias (GPT-4 inflates its own win rate by approximately 10%) [S2]. Mitigations: swap answer order and average, use GPT-4 or stronger as the judge model, and require chain-of-thought reasoning before the judgment — this alone reduces math/reasoning grading failure from 70% to 30% [S2].
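The three decisions above can be sketched as a small binary judge. This is a minimal illustration, not a reference implementation: `call_judge` is a hypothetical callable that sends a prompt to whichever judge model you use and returns its text, and the prompt wording, `parse_verdict`, and `agreement_rate` are assumptions made for this example.

```python
import re
from typing import Callable, Sequence

# Hypothetical judge prompt: reasoning first, then a binary verdict on its own line.
JUDGE_TEMPLATE = """You are grading a model response.
Criteria: {criteria}
Question: {question}
Response: {response}

First explain your reasoning step by step.
Then end with exactly one line: VERDICT: PASS or VERDICT: FAIL."""

_VERDICT_RE = re.compile(r"^VERDICT:\s*(PASS|FAIL)\s*$", re.MULTILINE)


def parse_verdict(judge_text: str) -> bool:
    """Return True for PASS and False for FAIL; raise if no verdict line exists."""
    matches = _VERDICT_RE.findall(judge_text)
    if not matches:
        raise ValueError("judge output contained no VERDICT line")
    # Take the last verdict line, which follows the reasoning.
    return matches[-1] == "PASS"


def judge(call_judge: Callable[[str], str], criteria: str,
          question: str, response: str) -> bool:
    """Build the prompt, call the (hypothetical) judge model, parse the verdict."""
    prompt = JUDGE_TEMPLATE.format(
        criteria=criteria, question=question, response=response
    )
    return parse_verdict(call_judge(prompt))


def agreement_rate(judge_labels: Sequence[bool],
                   expert_labels: Sequence[bool]) -> float:
    """Fraction of examples where the judge matches the domain expert."""
    if not judge_labels or len(judge_labels) != len(expert_labels):
        raise ValueError("label lists must be non-empty and the same length")
    matches = sum(j == e for j, e in zip(judge_labels, expert_labels))
    return matches / len(judge_labels)
```

In a calibration loop you would run `judge` over the roughly 30 expert-graded examples, compute `agreement_rate`, and revise the prompt until the rate reaches the 90% target. The order-swap mitigation applies to pairwise comparisons, which this single-response sketch does not cover.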

This layer catches: hallucination, irrelevance, tone drift, incomplete answers, factual errors in free-text responses.

Layer 3: Statistical Pass-Rate Thresholds

Even with Layers 1 and 2 in place, individual test runs will occasionally fail due to non-deterministic variance — not regressions. A single-shot test that fails is ambiguous: is this a real problem, or did the model just produce an unusual output on this run?

The data is clear: single-shot testing agrees with multi-sample ground truth only 92.4% of the time [S9]. That means roughly 1 in 13 runs gives you the wrong signal — either a false pass or a false fail. At temperature=0, 9.5% of prompts exhibit decision flips across runs; at temperature=1.0, that rises to 19.6% [S9].

The solution is to run N samples per test case and assert that at least K pass. Research suggests a minimum of 3 samples per prompt for 95% reliability [S9]. In practice, “8 of 10 runs must pass” is a common threshold for high-confidence assertions. Adjust N and K based on the acceptable flake rate for your pipeline.
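A K-of-N gate can be sketched in a few lines. This is an illustrative helper, not part of any framework: `passes_k_of_n` and the `check` callable (one model call plus its assertion, returning True or False) are names assumed for this example.

```python
from typing import Callable


def passes_k_of_n(check: Callable[[], bool], n: int = 10, k: int = 8) -> bool:
    """Run `check` up to n times and report whether at least k runs passed."""
    if not 0 < k <= n:
        raise ValueError("require 0 < k <= n")
    passed = 0
    for run in range(n):
        if check():
            passed += 1
        remaining = n - run - 1
        if passed >= k:
            return True   # threshold reached; skip the remaining runs
        if passed + remaining < k:
            return False  # threshold can no longer be reached
    return False  # not reached in practice; the loop always decides
```

The early exits matter because every run is a paid model call: a test that fails its first three runs under an 8-of-10 gate stops after three calls instead of ten.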

This layer is what turns a noisy test suite into a reliable CI gate. Without it, teams lose trust in their evals after the third false failure — and once trust is gone, the test suite is ignored.

This layer catches: false failures (and false passes) caused by non-deterministic variance, and the flaky tests that erode confidence in the suite.
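When choosing N and K, it helps to estimate how often a healthy test would fail the gate by chance. The sketch below assumes each run passes independently with the same probability `p`, which is a simplification, and `gate_pass_probability` is a name introduced here for illustration.

```python
from math import comb


def gate_pass_probability(p: float, n: int, k: int) -> float:
    """P(at least k of n independent runs pass) when each run passes with probability p."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))
```

Under this assumption, a test whose true per-run pass rate is 0.95 clears an 8-of-10 gate about 98.8% of the time, while one whose true rate has dropped to 0.70 clears it only about 38% of the time. That gap is what lets the gate absorb variance and still flag real regressions.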

Running This in CI

The testing layers above are only useful if they run automatically. The goal is evaluation on every pull request — not nightly, not manually, not “when someone remembers.”

Two integration patterns cover most setups:

Pytest-native. DeepEval is built directly on pytest. You write test functions using assert_test(), define metrics (G-Eval for custom criteria, Faithfulness for RAG, JSON Correctness for schema checks), and run them with deepeval test run. It supports parallel execution with -n 4 for throughput [S1]. This approach fits teams that already run pytest in CI and want LLM evals to live alongside their existing test suite.

Config-driven. Promptfoo provides a GitHub Action (promptfoo/promptfoo-action@v1) that triggers on PRs modifying prompt files. It runs a before-and-after comparison of prompt performance and generates a viewer link with evaluation metrics [S6]. This approach fits teams that want prompt evaluation decoupled from application tests, configured in YAML rather than Python.

Whichever pattern you use, four operational practices keep the pipeline reliable:

Separate eval tests from unit tests. Use a dedicated test directory or pytest marker (@pytest.mark.llm_eval) with longer timeouts. LLM evals are slower and more expensive than unit tests — mixing them causes frustration when a fast test suite suddenly takes minutes.

Scope the trigger. Run evals on prompt changes, model config changes, and retrieval pipeline changes — not on every code commit. A change to your CSS should not trigger an LLM evaluation pass. In GitHub Actions, use paths: filters on prompts/** or config/**.

Fail on regression, not on absolute thresholds. Compare each eval run against a baseline from the last known-good state. A prompt change that drops the model-graded pass rate from 9.2/10 to 8.5/10 is a signal worth investigating, yet an arbitrary “must be above 8.0” gate would let it through. The same gate would block a change that genuinely improves a suite still sitting just below the bar. Store baselines in version control alongside your prompts.

Report results as PR comments. Eval results buried in build logs are invisible to reviewers. Surface them as PR comments with pass/fail summaries, score comparisons against baseline, and links to detailed results. Both Promptfoo and LangSmith support this pattern natively.
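The baseline comparison can be sketched as follows. The file layout (a JSON object mapping suite names to pass rates), the `tolerance` parameter, and the function name are all assumptions for illustration. Pick a tolerance that matches the run-to-run noise you actually observe.

```python
import json
from pathlib import Path


def is_within_baseline(current_rate: float, baseline_path: Path,
                       suite: str, tolerance: float = 0.05) -> bool:
    """Return True if the current pass rate has not regressed past the tolerance.

    The baseline file is assumed to be JSON such as {"support_summaries": 0.92},
    committed to version control next to the prompts.
    """
    baselines = json.loads(baseline_path.read_text())
    baseline = baselines[suite]
    return current_rate >= baseline - tolerance
```

With a stored baseline of 0.92 and the default tolerance, a run at 0.85 is flagged as a regression while a run at 0.90 is not. When a change intentionally improves quality, update the baseline file in the same pull request.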

A note on cost: model-graded evals call an LLM judge, which adds latency and API spend. Budget for this. Caching responses where the input hasn’t changed, running evaluations in parallel, and scoping triggers to relevant file changes keep it manageable. For most teams, the eval cost is a fraction of the production inference cost — and far cheaper than shipping a regression.
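One way to cache judge calls is to key them on a hash of the model name and the full prompt. This is a minimal in-memory sketch: `cached_judge` and the `call_judge(model, prompt)` signature are hypothetical, and a real pipeline would more likely persist the cache between CI runs.

```python
import hashlib
import json
from typing import Callable, Dict


def cached_judge(call_judge: Callable[[str, str], str],
                 cache: Dict[str, str]) -> Callable[[str, str], str]:
    """Wrap a judge call so identical (model, prompt) pairs are only sent once."""

    def wrapper(model: str, prompt: str) -> str:
        # Hash the model and prompt together so a change to either misses the cache.
        key = hashlib.sha256(json.dumps([model, prompt]).encode("utf-8")).hexdigest()
        if key not in cache:
            cache[key] = call_judge(model, prompt)
        return cache[key]

    return wrapper
```

Cache the judge, not the model under test: repeated sampling of the system being evaluated is what measures its variance, and serving those samples from a cache would hide it.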

Defining Your Pass/Fail Contract

This is the hardest part of LLM testing, and where most teams get it wrong. Without a clear pass/fail contract, the eval suite becomes either a security blanket (thresholds so low they never fail) or a noise generator (thresholds so high they always fail). Both outcomes destroy trust, and a test suite no one trusts is a test suite no one runs.

The contract should define what “passing” means at each testing layer:

Schema compliance (Layer 1): 100% pass required. The output conforms to the schema or it does not. There is no acceptable failure rate for schema violations when you are using constrained decoding — if the schema check fails, something is broken in the integration, not in the model’s judgment.

Deterministic field assertions (Layer 1): 100% pass required. If the extracted field value is wrong — a category that doesn’t match the input, a priority that violates business rules — the test fails. These are not probabilistic checks. They are correctness checks on structured data, and they should be treated the same way you treat any unit test assertion.

Model-graded quality (Layer 2): pass-rate threshold. A single failed LLM-as-judge evaluation on one run could be judge variance, not a real regression. Require a pass rate — 8 of 10 or 9 of 10 — calibrated to the criticality of the task. Customer-facing summarization might warrant 9/10. Internal log classification might tolerate 7/10.

Regression detection: baseline comparison, not absolute scoring. The most useful signal is whether a change made things worse relative to the last known-good state. Track a baseline pass rate for each eval suite and flag regressions as PR-blocking failures. Absolute thresholds (“quality must be above 8.0”) are brittle because they don’t account for the natural distribution of model outputs and they block improvements that shift the distribution even marginally.

Three anti-patterns to avoid:

Uniform thresholds across layers. Schema compliance and subjective quality are fundamentally different kinds of checks. Applying the same pass/fail logic to both guarantees that one is too strict and the other too lenient.

Stale baselines. The baseline should advance with intentional improvements. When a prompt change demonstrably improves quality, update the baseline. A baseline that never moves penalizes progress.

Treating all failures as blockers. Some eval failures are warnings — worth investigating but not worth blocking a deploy. Schema failures and deterministic assertion failures should block. A model-graded quality check that drops from 9.1/10 to 8.9/10 on a low-criticality task should warn. Distinguish between these in your CI config.
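A contract like this can be written down as data rather than scattered across test files. The sketch below is one possible encoding, not a prescribed format: the check names, the `LayerRule` fields, and the `evaluate` function are hypothetical, and the thresholds simply mirror the examples given above.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    PASS = "pass"
    WARN = "warn"
    BLOCK = "block"


@dataclass(frozen=True)
class LayerRule:
    min_pass_rate: float  # required pass rate for this check
    blocking: bool        # True: a failure blocks the merge; False: it only warns


# Hypothetical contract: strict for Layer 1, graded by criticality for Layer 2.
CONTRACT = {
    "schema": LayerRule(min_pass_rate=1.0, blocking=True),
    "field_assertions": LayerRule(min_pass_rate=1.0, blocking=True),
    "judge_customer_summary": LayerRule(min_pass_rate=0.9, blocking=True),
    "judge_log_classification": LayerRule(min_pass_rate=0.7, blocking=False),
}


def evaluate(check: str, pass_rate: float) -> Outcome:
    """Map a measured pass rate to pass, warn, or block under the contract."""
    rule = CONTRACT[check]
    if pass_rate >= rule.min_pass_rate:
        return Outcome.PASS
    return Outcome.BLOCK if rule.blocking else Outcome.WARN
```

Keeping the rules in one table makes the per-layer differences explicit and gives reviewers a single place to see which failures block and which only warn.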

Building and calibrating this contract requires understanding your workloads, your quality bar, and your tolerance for different failure modes. The playbook gives you the layers. The contract makes them operational.