The Prompt Engineering Ceiling

Ask most teams how they improve their LLM system, and the answer is the same: change the prompt. Add more instructions. Add examples. Add chain-of-thought. Add guardrails. The prompt grows from 200 tokens to 2,000 tokens, and the team calls it engineering.

It works — until it doesn’t.

Prompts are brittle in ways that are hard to see until they break. Research across GPT-3.5, GPT-4, Llama 2, and Llama 3 shows that minor rephrasing of the same instruction can swing accuracy by up to 463% [S4]. Llama-2-70B scored 9.4% on one phrasing and 54.9% on another: same task, same model, same data [S4]. Which phrasings fail is not consistent across models, so a prompt optimized for GPT-4 may perform poorly on GPT-4o or Claude, with no way to predict the failure in advance [S4].

This fragility compounds at model upgrade boundaries. Migrating prompts across model versions causes performance drops of approximately 10% and requires a full re-evaluation [S10]. The authors of “What We Learned from a Year of Building with LLMs” put it bluntly: “Migrating prompts across models is a pain in the ass” [S10].

And in RAG systems, where most production LLM workloads live, prompt techniques often make things worse, not better. A systematic evaluation across six datasets found that most advanced prompt techniques underperformed the plain zero-shot baseline when applied to RAG [S1]. GPT-3.5 with RAG performed worse than the same model without retrieval on four of six datasets [S1]. The prompts weren’t bad. The retrieval was the bottleneck.

If the prompt isn’t the lever, what is?

Where Performance Actually Lives

Retrieval Quality

The most common RAG failure is not a prompt failure. It is a retrieval failure: “the right document exists in your corpus, but the system doesn’t surface it” [S5].

When retrieval fails, no amount of prompt engineering can compensate. The model generates a confident, well-structured answer — grounded in the wrong context. Worse, research shows that distracting documents (retrieved but irrelevant) produce the poorest correctness scores while simultaneously showing the highest model confidence [S1]. The failure mode is deceptive: the system looks like it’s working.

The retrieval fixes are well understood and measurable. Hybrid search — combining BM25 keyword matching with vector similarity — improves retrieval recall by 3 to 3.5x and raises end-to-end answer accuracy by 11-15% on complex reasoning tasks [S5]. Re-ranking the retrieved results with a cross-encoder model produces 10-40% precision improvements in mature implementations [S5]. These are not prompt changes. They are infrastructure changes — and their effects are larger, more durable, and more transferable across models.
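One way to combine a keyword ranking with a vector ranking is reciprocal rank fusion (RRF). The sketch below is illustrative only: the cited source does not say which fusion method was used, the document IDs and the two result lists are hypothetical, and `k=60` is a conventional default rather than a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. Documents found by both retrievers rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for one query from two retrievers.
bm25_hits = ["doc_a", "doc_b", "doc_c"]      # keyword (BM25) ranking
vector_hits = ["doc_c", "doc_a", "doc_d"]    # vector-similarity ranking

fused = reciprocal_rank_fusion([bm25_hits, vector_hits])
print(fused)
```

RRF only needs rank positions, so the BM25 and vector scores never have to be put on a common scale. That makes it a convenient baseline before trying weighted score fusion or a re-ranker.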

Adding more documents to the context window does not solve the problem. Even with perfect retrieval (100% recall), errors persist in 6-12% of instances [S1]. And adding documents beyond what’s relevant actively degrades performance: the error rate rises by approximately 1% for every five additional documents in the context, even as recall increases [S1]. More context is not better context.

Chunking Strategy

How you split documents before indexing has a larger effect on output quality than most teams realize.

A peer-reviewed study on clinical decision support systems compared four chunking strategies — adaptive, proposition-based, semantic, and fixed — with no changes to the LLM or prompt [S3]. Adaptive chunking achieved 50% fully accurate responses. Fixed chunking achieved 13%. That is a 37-percentage-point accuracy gap from a preprocessing decision alone, with a Cohen’s d of 1.03 (large effect size, p = 0.001) [S3]. Recall jumped from 0.40 with fixed chunking to 0.88 with adaptive chunking — again, with no model or prompt changes [S3].

Chroma’s controlled evaluation across 328,208 tokens and 472 queries found a 9% recall gap between the best and worst chunking strategies on the same corpus [S2]. Notably, OpenAI’s documented defaults — 800-token chunks with 400-token overlap — scored lowest across all metrics [S2].

Chunk size is not one-size-fits-all. NVIDIA’s benchmarks show that factoid queries perform best with 256-512 token chunks, while complex analytical queries benefit from 1,024 tokens or page-level chunking [S7]. The 128-token size underperformed significantly; 2,048 tokens showed diminishing returns [S7]. The optimal chunking strategy depends on the query distribution, not on a universal default.
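To make chunk size and overlap concrete, here is a minimal fixed-size chunker with both exposed as parameters. It is a sketch under stated assumptions: whitespace splitting stands in for a real tokenizer, the function name `chunk_tokens` is made up for illustration, and the sample document is synthetic.

```python
def chunk_tokens(tokens, chunk_size, overlap=0):
    """Split a token list into fixed-size chunks that share `overlap` tokens."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        # Stop once a chunk reaches the end, so no trailing chunk is
        # made up entirely of tokens already covered by the overlap.
        if start + chunk_size >= len(tokens):
            break
    return chunks


# Synthetic 2,000-"token" document; whitespace split is an assumption,
# a production pipeline would use the embedding model's tokenizer.
document_tokens = ("word " * 2000).split()

for size in (256, 512, 1024):
    chunks = chunk_tokens(document_tokens, chunk_size=size)
    print(size, len(chunks))
```

Sweeping `chunk_size` like this, then scoring each setting against a sample of real queries, is what calibrating to the query distribution looks like in practice.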

The implication is clear: teams spending weeks refining prompts while using default chunking settings are optimizing the wrong layer.

The Data Pipeline as Architecture

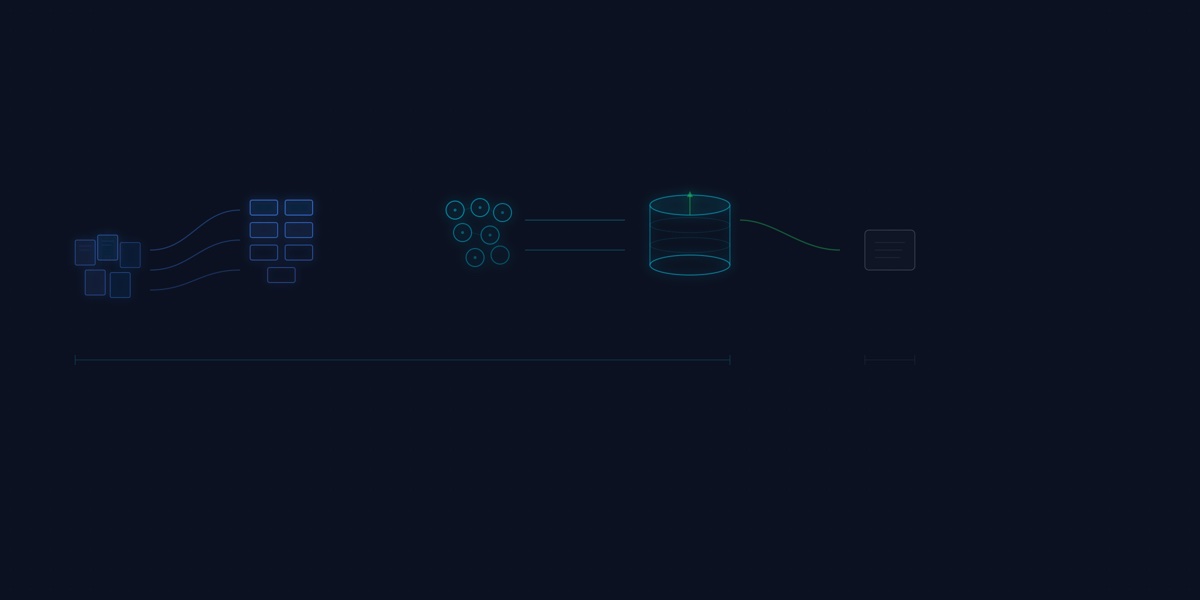

The RAG stack is not a prompt with a search API bolted on. It is a data pipeline. LlamaIndex’s architecture documentation describes five sequential stages — loading, transformations, indexing, retrieval, and generation — and explicitly draws the parallel: “This has parallels to data cleaning/feature engineering pipelines in the ML world, or ETL pipelines in the traditional data setting” [S8].

Four of those five stages happen before the prompt touches the LLM. The data connectors ingest from heterogeneous sources. The transformation layer splits documents into chunks, extracts metadata, and generates embeddings. The indexing layer writes to vector stores with configurable similarity metrics. Each of these stages has tunable parameters that directly affect output quality — and each can be cached, versioned, and incrementally reprocessed [S8].
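The pre-generation stages can be sketched as ordinary data-pipeline code. This is a toy illustration, not LlamaIndex’s API: the names `load`, `transform`, and `Index` are hypothetical, word overlap stands in for embedding similarity, and a content hash stands in for a real caching layer.

```python
import hashlib


def load(sources):
    """Loading: return raw documents as {doc_id: text}."""
    return dict(sources)


def transform(text, chunk_size=5):
    """Transformation: split text into word chunks (stand-in for real chunking)."""
    words = text.split()
    return [" ".join(words[i:i + chunk_size]) for i in range(0, len(words), chunk_size)]


class Index:
    """Indexing and retrieval over chunks, with hash-based incremental ingest."""

    def __init__(self):
        self.chunks = {}  # doc_id -> list of chunk strings
        self.hashes = {}  # doc_id -> content hash seen at last ingest

    def ingest(self, docs):
        """Re-process only documents whose content changed; return their IDs."""
        reprocessed = []
        for doc_id, text in docs.items():
            digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
            if self.hashes.get(doc_id) == digest:
                continue  # unchanged since last run, reuse cached chunks
            self.chunks[doc_id] = transform(text)
            self.hashes[doc_id] = digest
            reprocessed.append(doc_id)
        return reprocessed

    def retrieve(self, query, top_k=2):
        """Retrieval: rank chunks by word overlap with the query (toy scoring)."""
        query_words = set(query.lower().split())
        scored = []
        for doc_id, chunks in self.chunks.items():
            for chunk in chunks:
                overlap = len(query_words & set(chunk.lower().split()))
                if overlap:
                    scored.append((overlap, doc_id, chunk))
        scored.sort(key=lambda item: (-item[0], item[1], item[2]))
        return [(doc_id, chunk) for _, doc_id, chunk in scored[:top_k]]


docs = load({
    "a": "hybrid search combines keyword and vector retrieval",
    "b": "chunk size should match the query distribution",
})
index = Index()
first_run = index.ingest(docs)    # both documents are new
docs["b"] = "chunk size should match the actual query distribution"
second_run = index.ingest(docs)   # only the edited document is reprocessed
print(first_run, second_run, index.retrieve("hybrid search"))
```

Everything here runs before any prompt is assembled, and every stage has a parameter (`chunk_size`, the scoring function, `top_k`) that can be tuned and tested in isolation.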

This is not a prompt engineering problem. It is a data engineering problem with familiar patterns: ingestion, transformation, quality validation, indexing, and retrieval optimization. The skills that matter are the same skills that matter in any data-intensive system.

The Evidence: Data Engineering Beats Prompt Engineering

The pattern repeats across industries and scales.

Amazon Finance Q&A. A financial question-answering system improved accuracy from 49% to 86%, a 37-percentage-point gain. The primary drivers were iterative improvements to document chunking and embedding model selection: data pipeline modifications, not prompt changes [S9].

Snowflake SEC Filings. On financial document analysis, optimized retrieval pipelines with tuned chunk sizes narrowed the quality gap between Llama 3.3 70B and Claude 3.5 Sonnet [S6]. The engineering conclusion: “Chunking and retrieval strategies are far more impactful on output quality than the raw computational power of the generative language model” [S6]. Better data engineering substituted for a more expensive model.

Clinical Decision Support. Moving from fixed to adaptive chunking improved medical accuracy from 13% to 50% fully accurate with no changes to the LLM or prompt [S3]. A pure data preprocessing change produced a large, statistically significant effect on clinical output quality.

The cost implication is significant. If retrieval engineering can close most of the quality gap between a $0.88/M-token open-weight model and a $15.00/M-token managed API, then data engineering investment has a direct, measurable return in reduced inference costs. Prompt optimization does not offer the same leverage — it must be repeated for every model upgrade, and its effects are model-specific and non-transferable.
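The arithmetic behind that comparison is simple enough to check directly. In the sketch below the two per-million-token prices come from the paragraph above; the monthly token volume is a hypothetical figure, and treating each price as a single blended rate (rather than separate input and output rates) is an assumption.

```python
OPEN_WEIGHT_USD_PER_M = 0.88    # price quoted above, per million tokens
MANAGED_API_USD_PER_M = 15.00   # price quoted above, per million tokens


def monthly_cost(tokens_per_month, usd_per_million):
    """Inference cost in USD for a month at a flat per-million-token price."""
    return tokens_per_month / 1_000_000 * usd_per_million


tokens_per_month = 500_000_000  # hypothetical workload, not from the source

open_weight_cost = monthly_cost(tokens_per_month, OPEN_WEIGHT_USD_PER_M)
managed_cost = monthly_cost(tokens_per_month, MANAGED_API_USD_PER_M)
price_ratio = MANAGED_API_USD_PER_M / OPEN_WEIGHT_USD_PER_M

print(f"open-weight: ${open_weight_cost:,.2f}/month")
print(f"managed API: ${managed_cost:,.2f}/month")
print(f"price ratio: {price_ratio:.1f}x")
```

The ratio is independent of volume, so the absolute saving scales linearly with traffic while the retrieval engineering cost is paid roughly once.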

What This Means for Your Architecture

The hierarchy of engineering effort for production LLM systems should be:

- Data quality — are the right documents in your corpus, correctly parsed, with clean metadata?

- Retrieval architecture — are you using hybrid search, re-ranking, and appropriate similarity metrics?

- Chunking strategy — are your chunk sizes calibrated to your query distribution, not provider defaults?

- Embedding selection — are you using an embedding model suited to your domain and document types?

- Prompt design — is the prompt clear, minimal, and focused on a single task?

Most teams work this list from the bottom up. The evidence says to work it from the top down.

This does not mean prompts are irrelevant. A clear, well-structured prompt matters. But a clear prompt over bad retrieval produces confident, well-formatted wrong answers. Good retrieval with a simple prompt produces accurate answers, and those answers survive model upgrades, because the quality lives in the data layer, not in prompt wording tuned to one model.

The practical starting point: before you rewrite your prompt for the third time, check whether your retrieval pipeline is surfacing the right documents. Audit your chunking strategy against your actual query distribution. Measure retrieval recall and precision before you measure generation quality. If the retrieval is broken, everything downstream is noise.
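Measuring retrieval on its own takes only a small labeled set of queries with known relevant documents. The following is a minimal sketch of precision@k and recall@k; the evaluation set and document IDs are hypothetical, and it assumes each retrieved list contains no duplicate IDs.

```python
def precision_recall_at_k(retrieved, relevant, k):
    """Return (precision@k, recall@k) for one query.

    retrieved: ranked list of document IDs from the retriever (no duplicates).
    relevant:  collection of document IDs labeled relevant for the query.
    """
    top_k = retrieved[:k]
    relevant = set(relevant)
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical evaluation set: (retriever output, labeled relevant documents).
eval_set = [
    (["d1", "d7", "d3", "d9"], {"d1", "d3"}),
    (["d4", "d5", "d6"], {"d2", "d5"}),
]

k = 3
results = [precision_recall_at_k(retrieved, relevant, k) for retrieved, relevant in eval_set]
mean_precision = sum(p for p, _ in results) / len(results)
mean_recall = sum(r for _, r in results) / len(results)
print(f"precision@{k}={mean_precision:.2f} recall@{k}={mean_recall:.2f}")
```

Tracking these two numbers per pipeline change (chunk size, hybrid search, re-ranking) shows whether a fix helped retrieval before any generation quality is measured.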

RAG is a data problem. Treat it like one.